Three models.

One physics.

Each model solves a distinct problem in computational biology — built from scratch, without borrowing pretrained encoders or inheriting the wrong inductive biases.

The first open foundation model for binding prediction across five molecular modalities, including protein, RNA, DNA, and small molecule, built entirely from scratch. One universal atom representation. Shared encoder weights. No pretrained encoders borrowed.

A protein carbon and an RNA carbon pass through identical learned transformations. This is not a design constraint — it is the entire point.
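To make the weight-sharing idea concrete, here is a minimal sketch, not the model's actual architecture. It assumes a hypothetical element-keyed vocabulary (`ELEMENTS`), a random embedding table (`EMBED`), and a single random weight matrix (`W`) standing in for learned parameters. The modality tag is carried along but never consulted by the encoder.

```python
import math
import random

# Hypothetical universal atom vocabulary: keyed by element only, never by modality.
ELEMENTS = ["C", "N", "O", "P", "S", "H"]
DIM = 4

rng = random.Random(0)
# One shared embedding table and one shared weight matrix
# (random stand-ins for learned weights; an assumption for illustration).
EMBED = {el: [rng.gauss(0, 1) for _ in range(DIM)] for el in ELEMENTS}
W = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(DIM)]


def encode_atom(element):
    """Apply the same transformation to an atom, whatever molecule it belongs to."""
    x = EMBED[element]
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W]


def encode_molecule(atoms):
    """Encode (modality, element) pairs; the modality tag is deliberately ignored."""
    return [encode_atom(element) for _modality, element in atoms]


protein = [("protein", "N"), ("protein", "C"), ("protein", "O")]
rna = [("rna", "P"), ("rna", "O"), ("rna", "C")]

protein_carbon = encode_molecule(protein)[1]
rna_carbon = encode_molecule(rna)[2]
print(protein_carbon == rna_carbon)
```

In this toy version the two carbon vectors are equal because nothing downstream mixes in neighboring atoms. In a context-aware encoder the final representations would generally differ by chemical environment, while the weights applied to them would still be the same.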

XUZU (X-modal Unified Zero-shot Universal) is an aptamer language model for de novo DNA and RNA aptamer design against any protein target, with no SELEX experimental data required.

Powered by FEBI (Fundamental Encoding Block Intelligence) and RJ (Relational Junction) — proprietary blocks for sequence context and structural graph propagation. A built-in GER loop refines generation via REINFORCE toward lower Kd.
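As a rough illustration of refining a sequence generator with REINFORCE toward lower Kd, the sketch below trains a per-position categorical policy over nucleotides. It does not reproduce FEBI, RJ, or the GER loop. The scoring function `toy_log_kd` is a hypothetical stand-in for a binding predictor (it simply rewards G/C content), and every name and hyperparameter here is an assumption.

```python
import math
import random

NUCLEOTIDES = "ACGU"


def toy_log_kd(seq):
    """Hypothetical stand-in for a binding predictor: more G/C gives lower log Kd."""
    return -sum(1.0 for base in seq if base in "GC") / len(seq)


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce(length=12, steps=300, batch=16, lr=0.5, seed=0):
    """Train independent per-position logits with the REINFORCE estimator."""
    rng = random.Random(seed)
    logits = [[0.0] * 4 for _ in range(length)]
    for _ in range(steps):
        probs = [softmax(row) for row in logits]
        samples = []
        for _ in range(batch):
            idx = [rng.choices(range(4), weights=p)[0] for p in probs]
            seq = "".join(NUCLEOTIDES[i] for i in idx)
            samples.append((idx, -toy_log_kd(seq)))  # reward = -log Kd
        # Mean-reward baseline reduces the variance of the gradient estimate.
        baseline = sum(r for _, r in samples) / batch
        for idx, reward in samples:
            advantage = reward - baseline
            for pos, chosen in enumerate(idx):
                for k in range(4):
                    # Gradient of log softmax w.r.t. logit k: 1[k == chosen] - p_k
                    grad = (1.0 if k == chosen else 0.0) - probs[pos][k]
                    logits[pos][k] += lr * advantage * grad / batch
    return logits


def greedy(logits):
    """Pick the highest-scoring nucleotide at each position."""
    return "".join(NUCLEOTIDES[row.index(max(row))] for row in logits)


best = greedy(reinforce())
print(best, toy_log_kd(best))
```

With a real predictor in place of `toy_log_kd`, the same update rule would push sampling probability toward sequences the predictor scores as tighter binders. The policy here is position-independent only to keep the sketch short.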

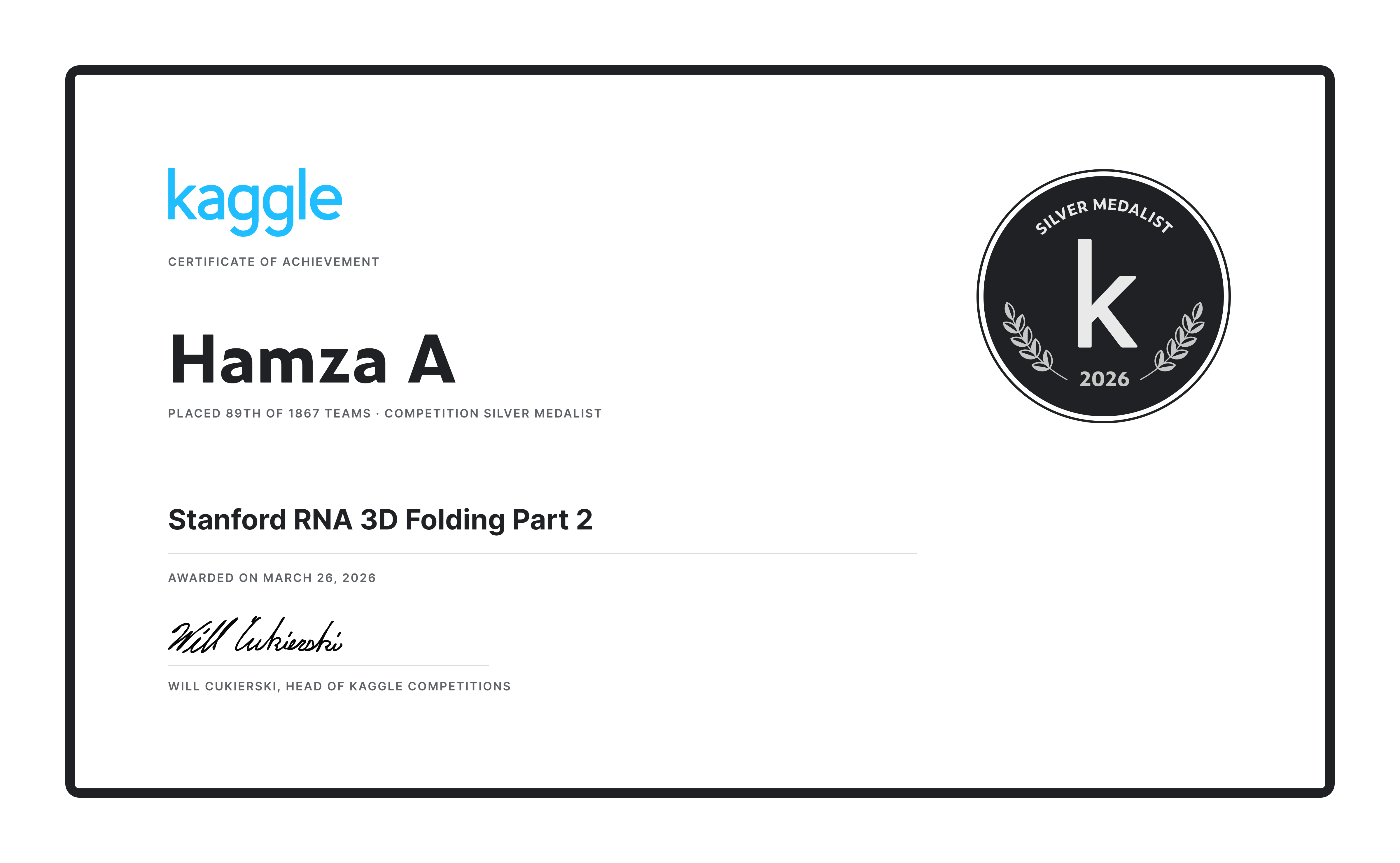

RNJ is an RNA 3D structure prediction model, codenamed RNX during development and competition. It competed in the Stanford RNA 3D Folding Part 2 Kaggle challenge, placing 89th out of 1,867 teams worldwide and earning a Silver Medal, awarded March 26, 2026.

RNJ is the structural counterpart to XUZU: where XUZU uses dot-bracket secondary structure as input context, RNJ predicts the full 3D atomic coordinates that define RNA tertiary fold and binding geometry.
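For readers unfamiliar with dot-bracket notation, the sketch below shows how a secondary-structure string maps to base pairs. It is a generic parser for the plain `(`, `)`, `.` alphabet, not XUZU's input pipeline, and it does not handle the extended bracket types used for pseudoknots. The function name `dot_bracket_to_pairs` is illustrative.

```python
def dot_bracket_to_pairs(structure):
    """Return sorted (i, j) base-pair indices (0-based) for a dot-bracket string."""
    stack = []
    pairs = []
    for i, symbol in enumerate(structure):
        if symbol == "(":
            stack.append(i)  # remember the opening position
        elif symbol == ")":
            if not stack:
                raise ValueError(f"unmatched ')' at position {i}")
            pairs.append((stack.pop(), i))  # pair with the most recent open base
        elif symbol != ".":
            raise ValueError(f"unexpected symbol {symbol!r} at position {i}")
    if stack:
        raise ValueError(f"unmatched '(' at position {stack[-1]}")
    return sorted(pairs)


hairpin = "((((....))))"
pairs = dot_bracket_to_pairs(hairpin)
print(pairs)  # [(0, 11), (1, 10), (2, 9), (3, 8)]
```

The pair list records which bases are paired but says nothing about where atoms sit in space, which is the gap a 3D coordinate predictor fills.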